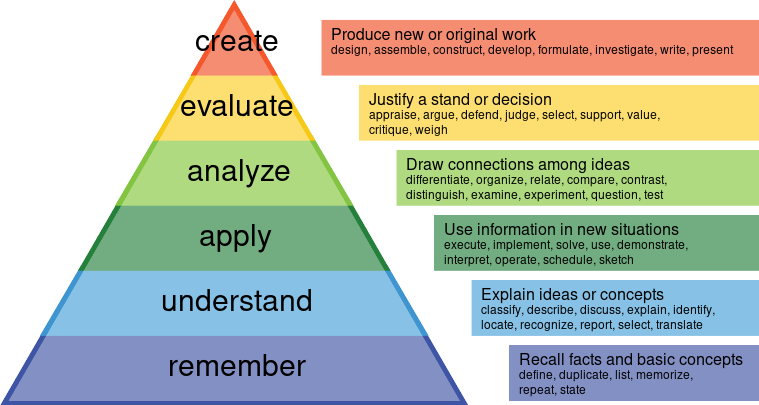

Bloom’s Taxonomy is one of my favorite models for how we learn.

The big idea is that, as we learn, we build on each lower layer of the pyramid toward higher-order functions.

It is a pretty standard way of teaching things. First, through repetition, we remember concepts. Eventually, we can apply our understanding and answer questions. Finally, we can create, building on all the lower levels.

We often use the word pedagogy to refer to the study of how we teach and learn.

This blog post is about a kind of AI-induced pedagogical shift, in particular when it comes to software.

AI Has Inverted The Pyramid

With AI (LLMs), who cares about remembering when you can instantly create!

Whether you ask ChatGPT for an essay on “the pros and cons of slavery”, ask Midjourney for a “cat destroying a city in the style of Picasso”, or ask Claude for “a to-do list app for cats”, you can now create things instantly, without going through the boring intermediate steps of learning.

I’m not the first person to recognize this; Michelle Kassorla wrote about it a year ago. I’m going to write my own blog post anyway, because I want to focus on software engineering rather than general pedagogy.

What Does It Mean To Create?

My main thesis is that, in software engineering, creating software is really the process of building up a theory of why we are building something and how it connects to some real-world problem.¹

Are we “co-creating” with our LLMs? Or are we just using supercharged tools?

With software, it is BOTH.

What really matters to me is that we are reaching the top of the pyramid without being forced through the intermediate layers.

In a lot of ways, this is awesome (in a school setting)!

But is it sustainable (in the software engineering realm)?

What Is The Important Part of “Creation”, When It Comes To Software?

I’ve talked about this subject before, but the idea that young whippersnappers are skipping steps without true understanding is NOT new.

It has happened every decade as computing has become more and more abstract:

- Assemblers creating machine code without humans understanding it

- Compilers creating machine code without humans understanding assembly

- Interpreters creating programs without humans understanding… C.

- Developers creating programs without understanding the language at all!!!

Isn’t It The Same This Time?

When I’m on the producing side (using LLMs to generate code), I feel like it is exactly the same as these other revolutions.

We are just raising the level of abstraction. I don’t even need to look at the generated code, just as I don’t need to check the Assembly or binary that gcc outputs.

Right?

Heck, who cares what language the software is in anyway!

I don’t know Assembly, but I can still write some C code.

gcc knows how to output Assembly, so I don’t have to!

Even if I did know, say, Rust, I’m sure Claude could write better, more idiomatic Rust than I can anyway.

Using LLMs is just like any other advancement in our software engineering tools.

Is It Different This Time?

When I’m on the receiving side (reviewing code, or living with someone else’s LLM-generated code), I feel the opposite.

I think it is different this time: the pyramid has been flipped, and that is bad. Creating things without understanding them is a recipe for software disasters.

When someone puts work up for code review, I insist that it is their code, NOT Claude’s.

They have to own it, because we have to live with it.

This is different from school. In school, your design decisions don’t compound, and you don’t have to be on call for your projects.

What Does The Pyramid Have To Do With Anything?

Which is it? Who cares about the pyramid?

My point here is that software engineering is BOTH:

- A pedagogical exercise: we are always learning new things as we build systems

- An engineering exercise: with every line of code we write, we are honing our craftsmanship, taking ownership, and balancing sustainability

The pyramid inversion, I would argue, is not a problem at school. Bloom never meant the taxonomy to be prescriptive anyway! It was only meant to describe how people learn. It is OK to learn things in a new way with AI.

The pyramid inversion is a problem at work (in the software engineering field). It is a problem because, fundamentally, you can’t be a good engineer if you don’t understand what you are creating!²

For junior engineers, this can be fine, because we expect senior engineers to be present, somewhere, to help with the collective theory building. But if junior engineers are not using AI to learn, and only using it as an abstraction lift, then we are in trouble.

My Point (For Professionals)

My point is that, to be effective in software engineering, LLMs MUST be treated as learning partners.

They are NOT a deterministic, correct compiler whose output you can ignore as an “implementation detail” of your build.

Your creation, even if it is literally just the files Claude outputs, must be part of your theory building.

If it isn’t, that is fine, as long as you are not on call for it.

My Point (For Students)

For students, using LLMs to create can be a great way to learn!

But you have to be brutally honest about the intellectual activity you are undertaking.

If you start with the top of the pyramid (Creation), that is fine, but if you don’t work your way down the layers of the pyramid, all the way to Remembering, then you won’t be learning anything.

1. How AI relates to “Programming as Theory Building” has also been explored before; see this blog post. The problem is that this blog post was written in the distant past of … 2025 (one year ago now). ↩︎

2. Of course, computers are a fractal of complexity. When I say “understand”, I don’t mean nand2tetris. I mean only a first-level understanding of your (Claude’s) work output, not all the way down to the electrons. ↩︎